Intro

A few years ago, I developed a prototype of a data layer testing system, implemented it into the development process, and explained how to use it to two teams. This was an internal education portal project targeting almost the entire market in Denmark. In this post, I am going to sum it all up and talk about the key changes.

First things first, let us repeat some key points so that you could get the context. This framework was developed based on the internal code infrastructure and the technologies in use so as to automate and simplify the process of testing complex SQL queries. In particular, it enabled testing individual SQL queries and installing a clean database for a new client, as well as detecting hidden compatibility issues, first when upgrading the server from MySQL 5.1 to MySQL 5.6, and then when migrating to MariaDB.

Initially, the system was used on a small project that just included two teams; however, we needed to scale it across other projects with 15 teams. Besides, this was a single module within a single project focused on the MSTest framework, which did complicate matters.

To learn more on the system structure and how it works, please check out Part 1.

Changes

Shortly after implementation, the developers often reported issues, as the framework was either difficult or totally impossible to use. I always treated those issues seriously, trying to help them learn the tool they were new to. In my spare time, I also fixed bugs and provided improvements.

Splitting packages

The next stage was implementing our tool on another project with a much larger code size, more complicated database structure, more complex SQL queries, and a larger number of developers. Our first task was to adapt the library to a different basic testing framework. This project used NUnit, while the library was originally developed for MSTest.

As such, we had to split the entire library into multiple builds: DataLayerTests.Core, DataLayerTests.MSTestDriver, and DataLayerTests.NUnitDriver, and assemble them as separate NuGet packages. Overall, we completed migration to another testing framework without any major issues, successfully splitting and adapting the code.

The most problematic thing in creating adapters was identifying the current testing context to correctly define the attributes with metadata used in classes and test methods.

In the MSTest framework, we use the TestContext property in order to inject the dependency into a class, while for the NUnit framework, this information is available only through the TestContext.CurrentContext global variable. Using the Adapter pattern, we combined them into a common interface and implemented them depending on the base test class.

// BaseDataProviderTests.cs

public abstract class BaseDataProviderTests

{

protected ITestContextProvider TestContextProvider { get; set; }

private List<UseTableAttribute> GetUseTableAttributes()

{

Type type = GetType();

IList<CustomAttribute> attributes = type

.GetCustomAttributes<UseTableAttribute>(true)

.ToList();

if (!string.IsNullOrWhiteSpace(TestContextProvider?.CurrentTestContext?.MethodName))

{

MethodInfo testMethod = type.GetMethod(TestContextProvider.CurrentTestContext.MethodName);

if (testMethod != null)

{

attributes.AddRange(testMethod.GetCustomAttributes<UseTableAttribute>(true));

}

}

return attributes;

}

}

// BaseMSTestDataProviderTests.cs

public abstract class BaseMSTestDataProviderTests : BaseDataProviderTests, ITestContextProvider

{

protected BaseMSTestDataProviderTests()

{

TestContextProvider = this;

}

public ITestContext CurrentTestContext => new MsTestContextAdapter(TestContext);

}

public class MsTestContextAdapter : ITestContext

{

private readonly TestContext _testContext;

public MsTestContextAdapter(TestContext testContext)

{

_testContext = testContext;

}

public string TestTitle => _testContext.TestName;

public string MethodName => _testContext.TestName;

}

// BaseNUnitDataProviderTests.cs

public abstract class BaseNUnitDataProviderTests : Core.BaseDataProviderTests

{

protected BaseNUnitDataProviderTests()

{

TestContextProvider = new NUnitTestContextProvider();

}

}

public class NUnitTestContextAdapter : ITestContext

{

private readonly TestContext _testContext;

public NUnitTestContextAdapter(TestContext testContext)

{

_testContext = testContext;

}

public string TestTitle => _testContext.Test.Name;

public string MethodName => _testContext.Test.MethodName;

}

public class NUnitTestContextProvider : ITestContextProvider

{

public ITestContext CurrentTestContext => new NUnitTestContextAdapter(TestContext.CurrentContext);

}

This allowed us to minimize code duplication; as a result, the adapter packages were small, while the core framework logic still remained in the base package. The most important thing here was to maintain the correct version of the packages: since we made incompatible changes, we had to raise the version number to 2.0.

External entities

The original set of rules for generating test entities included the ForeignKeyRule rule, which allows you to create a related entity. This rule covered the needs of developers when creating new tests for various queries and data structures. At some point, however, this was no longer enough.

We had the following schema with tables to fill in so as to test the next query:

Our task was to create settings for GroupEntity and PersonGroupEntity at the same time, with the GroupEntity to CourseEntity ratio being one-to-one and PersonGroupEntity to GroupEntity, many-to-one. We tried a lot of ways of filling in the required data using the tools the library offered at this stage of development. However, we did not succeed in promptly finding a solution that would suit everyone, so, initially, we just filled in the test data manually. After a while, we had an idea of creating a new rule, similar to ForeignKeyRule, but being exactly the opposite, which I named InsideOutForeignKeyRule.

With ForeignKeyRule, an additional entity is injected into the database first, and then it is linked through assigning newly generated identifiers. In our new rule, it was quite the reverse: we injected the main entity first, and then linked and inserted the new one.

Thus, with this new rule, we got finally able to write concise entity generation code.

// InsideOutForeignKeyRule.cs

public class InsideOutForeignKeyRule<TSourceEntity, TTargetEntity, TValue> : BaseEntityRule

where TSourceEntity : Entity, new()

where TTargetEntity : Entity, new()

{

private readonly PropertyInfo _sourcePropertyInfo;

private readonly PropertyInfo _targetPropertyInfo;

public InsideOutForeignKeyRule(Expression<Func<TSourceEntity, TValue>> sourceProperty, Expression<Func<TTargetEntity, TValue>> targetProperty)

{

_sourcePropertyInfo = sourceProperty.GetProperty();

_targetPropertyInfo = targetProperty.GetProperty();

if (_sourcePropertyInfo == null || _targetPropertyInfo == null)

{

throw new InvalidOperationException("Invalid property for entity");

}

AppliedEntityTypes.Add(typeof(TSourceEntity));

}

public override void Invoke(EntityRuleContext context)

{

object sourceValue = _sourcePropertyInfo.GetValue(context.CurrentEntity);

if (sourceValue != null && !sourceValue.Equals(default(TValue)))

{

TTargetEntity targetEntity = context.DataFactory.CreateEntity<TTargetEntity>(context.EntityRuleSet, context.EntityGroupProviders, false);

_targetPropertyInfo.SetValue(targetEntity, sourceValue);

context.DataProvider.Insert(targetEntity);

}

}

}

Data insertion optimization

Initially, when we developed this framework, we assumed we would be mostly working with only one data type, so the storage for generated objects was a simple typed list, which at the last stage was sent to the database. All rules for filling and generating entities had a simple interface with only one method, Invoke(EntityRuleContext context), which could make changes to the entity before being inserted into the base. At the same time, we added more complex rules, such as ForeignKeyRule, that generated new entities of a different type. This rule generated a new entity, inserted it into the database, and used the resulting new identifier to fill in the field of the parent entity. The code below generates 21 data insertion requests, which can significantly reduce the test performance.

DataFactory

// Specify BookEntity as the main entity being generated

.CreateBuilder<BookEntity>()

// Specify the UserEntity dependency, which is 1:1 by default

.UseForeignKeyRule<BookEntity, UserEntity, int>(book => book.AuthorId, user => user.Id)

// Fill in the required fields

.UseRule(new UniqueSetterRule<UserEntity>(user => user.UserName))

.UseUniqueSetterRule(book => book.Title)

// (1*) Generate 20 BookEntity items

.CreateMany(20)

// Send the entities to the database

.InsertAll();

-

(1*) Thus, we create 20 BookEntity items and inject them into the memory, using the previously declared rules one by one. This way, we call the UseForeignKeyRule rule, which does not use any staging storage, immediately sending the new entity to the database. As a result, we get 20 requests for inserting single entities, and after calling InsertAll() we get the last 21st query to insert those 20 BookEntity items.

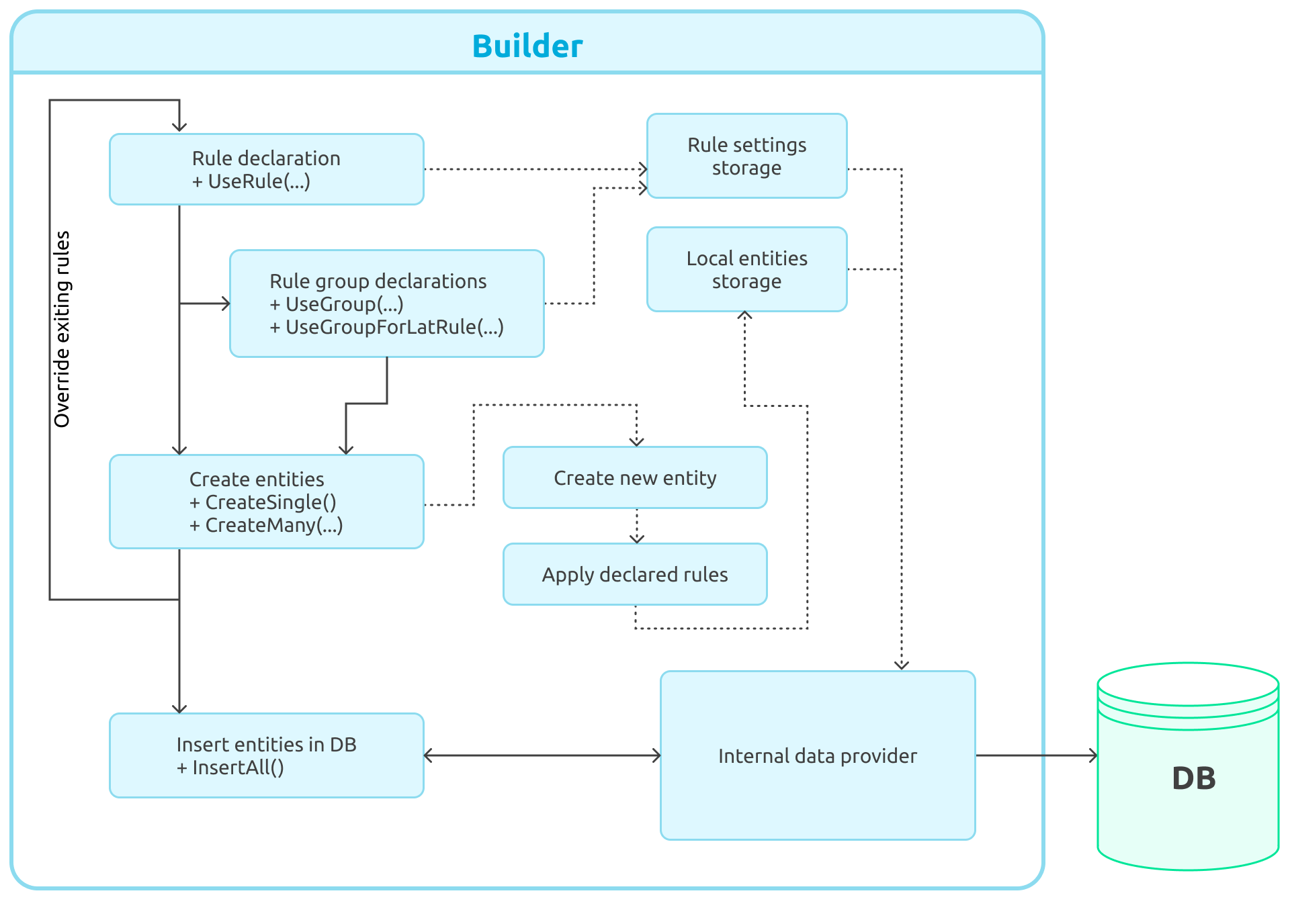

Figure 2: Entity Builder Workflow

Figure 2: Entity Builder Workflow

When I initially designed and developed the testing system, I was aware of that issue, but first I wanted to test the developed solution against a production project, get feedback, and then continue developing. Now that I can see that the library works well and does not cause any critical issues, I can finally work on optimization and enhancement.

In the new version, I created a universal storage that enables access to any type of entity. Its main feature is, however, creating and grouping data insertion requests.

Previously, the entity generator framework used direct database access, which complicated the process of reworking the generator in terms of developing a lazy write tool. This is why I moved all necessary methods to the interface, which had a special temporary storage and a class that works directly with the databas.

In order to implement a tool for lazy insertion of related entities, I had first to revise the tool for describing new data generation rules. I deemed the Invoke method dated, and currently use two new ones: OnBeforeInsert and OnAfterInsert, which are called, respectively, before and after the entity gets inserted into the database. One can also subscribe to the event of inserting an entity into the database so as to get the updated value of the Auto Increment field or insert an entity with an explicit restriction in the database based on a secondary key.

In most cases, the generation rules remained unchanged in terms of logic; however, I had to redesign the the related entity generation rule considering the new architecture. Let's see how it previously worked and how it does now.

// Previous implementation

public override void Invoke(EntityRuleContext context)

{

const string targetEntityKey = "ForeignKeyRule.TargetEntity";

object sourceValue = _sourcePropertyInfo.GetValue(context.CurrentEntity);

if (sourceValue == null || sourceValue.Equals(default(TValue)))

{

// Trying to retrieve data from the group or creating them if there are no data

TTargetEntity targetEntity = GetDataFromGroup(

context.CurrentEntityGroup,

targetEntityKey,

() => context.DataFactory.CreateEntity<TTargetEntity>(context.EntityRuleSet, context.EntityGroupProviders)

);

var value = _targetPropertyInfo.GetValue(targetEntity);

_sourcePropertyInfo.SetValue(context.CurrentEntity, value);

}

}

// Current implementation

public override void OnBeforeInsert(EntityRuleContext context)

{

const string targetEntityKey = "ForeignKeyRule.TargetEntity";

object sourceValue = _sourcePropertyInfo.GetValue(context.CurrentEntity);

if (sourceValue == null || sourceValue.Equals(default(TValue)))

{

// Trying to retrieve data from the group or creating them if there are no data

TTargetEntity targetEntity = GetDataFromGroup(

context.CurrentEntityGroup,

targetEntityKey,

() => context.DataFactory.BuildAndInsertEntity<TTargetEntity>(context.EntityRuleSet, context.EntityGroupProviders)

);

// Subscribing to additional entity insertion in order to update the original values

context.InsertionDataProvider.SubscribeOnAfterInsert(targetEntity, () =>

{

var value = _targetPropertyInfo.GetValue(targetEntity);

_sourcePropertyInfo.SetValue(context.CurrentEntity, value);

});

}

}

Redesigning test entity generator syntax

Previously, the original syntax completely suited my purposes, but after a few years of creating tests it turned out to be too cumbersome and difficult to understand. This was also confirmed with other developers I worked with. Ironically, I had to spend a lot of time myself to understand what is going on with the tests I created a few months ago.

This left me no other option than revise the internal logic of entity generation in order to make builders simple and flexible. It took me a lot to come up with and agree on a new format; as a result, however, I managed to design a convenient and lightweight syntax helps better understand the test code over time.

I am going to list the previous syntax issues here, so that you might understand why I finally opted to make changes.

- Long method names that can appear confusing to developers.

- Difficulties in managing the visibility of entity creation rules

- No option to work with multiple entities at one time

To better understand the root cause of those issues, let us take this piece of code for example.

var users = DataFactory

.CreateBuilder<UserEntity>()

// So far so good

.UseUniqueSetterRule(user => user.UserName)

// Trying to add rules for another (Book) entity

// Already confusing: what does UseRule refer to?

.UseRule(new UniqueSetterRule<BookEntity>(book => book.Title))

// More manipulations with entity types

.UseInsideOutForeignKeyRule<UserEntity, BookEntity, int>(user => user.Id, book => book.AuthorId)

// ... After creating N additional rules…

// Which entity are we creating?

.CreateMany(5)

.InsertAll();

As you can see, the issue is that all the rules are linear, they are not grouped in any way, and while declaring main entities looks simple, a lot of unnecessary things appear for all the rest. By adding scopes of rules that enable temporarily overriding the entity data, the code became even harder to both understand and debug. Let's see another an example:

DataFactory

.CreateBuilder<BookPermissionEntity>()

// Another example of hard to understand syntax

.UseRule(new ForeignKeyRule<BookPermissionEntity, BookEntity, int>(p => p.BookId, b => b.Id))

.UseRule(new ForeignKeyRule<BookPermissionEntity, GroupEntity, int>(p => p.GroupId, g => g.GroupId))

.UseRule(new InsideOutForeignKeyRule<BookPermissionEntity, GroupUserEntity, int>(p => p.GroupId, ug => ug.GroupId))

.UseRule(new ForeignKeyRule<GroupUserEntity, UserEntity, int>(ug => ug.UserId, u => u.Id))

.UseGroupForLastRule(singleUserGroupProvider)

.UseRule(new ForeignKeyRule<BookEntity, UserEntity, int>(b => b.AuthorId, u => u.Id))

.UseGroupForLastRule(singleUserGroupProvider)

.UseRule(new DataSetterRule<UserEntity>(u => u.UserName = "MyUser1"))

.UseRule(new UniqueSetterRule<BookEntity>(b => b.Title))

.UseRule(new DataSetterRule<GroupEntity>(g => g.Name = "Name"))

.UseDataSetterRule(p => p.Permission = 1)

// Remember the existing set of rules

.PushRuleSet()

// Add new rules and create entities

.UseRule(new DataSetterRule<GroupUserEntity>(ug => ug.Level = 1))

.CreateSingle()

// Roll back and remember again

.PopRuleSet()

.PushRuleSet()

// Repeat this for another data set

.UseRule(new DataSetterRule<GroupUserEntity>(ug => ug.Level = 2))

.CreateSingle().PopRuleSet()

.InsertAll();

With this linear approach, we can hardly understand what refers to what. Extra indentation does help, but only until the code gets auto formatted.

As a result, I took the following decision. Previously, we had to explicitly create a new builder instance for each entity and continue working with a linear chain of methods; now, we abandoned this approach and switched to a set of configuration functions.

Previously, one was unable to do without the following:

DataHelper.CreateBuilder<UserEntity>()

// Use rules and creations

.InsertAll();

Currently, it is only about calling a single method:

CreateBuilderAndInsert(

// Use rules and creations

);

I also wanted to simplify the matters for developers and implement tips, grouping rules and logic using the language syntax. This is why I split the logic into multiple interfaces, the core ones being:

- IScopedEntityBuilder that provides access to entity creation, grouping, and default rule setting methods.

- INestedEntityBuilder, which enables defining the rules for entities

Both interfaces are abstract and allow one to create rules for any type of entity; they also enable simplifying the code by providing a variation for a specific entity.

Example 1. Simple entity creation, when the rules are set only for one entity; adding rules for other entities, as well as creating totally different ones, will not work:

var storage = BuildAndInsert<UserEntity>(b => b

.CreateMany(5, nb => nb

.SetUnique(user => user.UserName, 32)

.SetData(user => user.IsDeleted = false)

)

);

Example 2. Configuring multiple entities:

var storage = BuildAndInsert(b => b

// Specify what we are going to create and which set of rules we want to use

.CreateMany<UserEntity>(5, nb => nb

.SetUnique<UserEntity>(user => user.UserName, 32)

.SetUnique<BookEntity>(book => book.Title)

.UseInsideOutForeignKey<UserEntity, BookEntity, int>(user => user.Id, book => book.AuthorId)

)

);

This is a nice-looking example but, in case you have more rules for various entities, this might also look strange, with the data being mixed. This means, one has to limit the data definition areas, like this:

var storage = BuildAndInsert(b => b

.CreateMany<UserEntity>(5, nb => nb

// Group rules for the user entity only

.For<UserEntity>(s => s

.SetUnique(user => user.UserName, 32)

.SetData(user => user.IsDeleted, false)

)

// Group rules for the book entity only

.For<BookEntity>(s => s

.SetUnique(book => book.Title)

.SetUnique(book => book.ISBN)

)

// Link the entities in the common generation rule flow

.UseInsideOutForeignKey<UserEntity, BookEntity, int>(user => user.Id, book => book.AuthorId)

)

);

Now you can see the rules have been grouped, and the compiler will not allow the developer to mix them. This makes the tests easier to read, while in case you need to expand the code, you will see where to add new rules with no issues.

Example 3. Rule visibility areas.

BuildAndInsert(b => b

// With SetDefault, one can determine the general rules to be used for all entities

.SetDefault(nb => nb

.UseForeignKey<BookPermissionEntity, BookEntity, int>(p => p.BookId, book => book.Id)

.UseForeignKey<BookPermissionEntity, GroupEntity, int>(p => p.GroupId, g => g.GroupId)

.UseInsideOutForeignKey<BookPermissionEntity, GroupUserEntity, int>(p => p.GroupId, gu => gu.GroupId)

.UseForeignKey<GroupUserEntity, UserEntity, int>(gu => gu.UserId, u => u.Id)

.UseGroupForLastRule(singleUserGroupProvider)

.UseForeignKey<BookEntity, UserEntity, int>(book => book.AuthorId, u => u.Id)

.UseGroupForLastRule(singleUserGroupProvider)

.SetData<GroupEntity>(g => g.Name = "Name")

.SetData<UserEntity>(u => u.UserName = "Username1")

.SetUnique<BookEntity>(book => book.Title)

)

// With an additional and optional creation method parameter, one may specify additional rules that will be valid for this action only

.CreateSingle<BookPermissionEntity>(nb => nb

.SetData<GroupUserEntity>(gu => gu.Level = 1)

)

.CreateSingle<BookPermissionEntity>(nb => nb

.SetData<GroupUserEntity>(gu => gu.Level = 2)

)

);

This makes the generation code easier to understand and, which is even more important, the compiler and the IDE may give hints on where to add rules.

Conclusion

As a result, the library is constantly getting better, helping detect the most unusual and strange errors when writing complex SQL queries, covering them with multiple data combinations. In particular, the library helped test and debug unexpected issues when migrating from older MySQL versions to MariaDB. On one of the projects, we already have around 1,000 tests, all of them getting complete in four minutes. With the database structure being complex, I believe this is quite a decent result.